AI Detectors and Your Kid's Teachers

AI detectors don't work, and parents need to be ready to back their kids if these detectors are in use in the classroom.

If you are the parent of a student who writes in school, please be prepared to have a conversation with their teacher about whether the teacher is using so-called “AI detectors” when evaluating homework.

Why? Because AI detectors do not work. (The author of the linked blog is a professor at Wharton and studies entrepreneurship and innovation, and is generally on top of this whole AI thing.)

Homework is changing, and some teachers are likely to try to cling to the ways of old - for years, possibly. But one thing is certain - some kids will use AI, some won’t, and you will not currently be able to tell with certainty from AI detectors.

We’re in this liminal space where LLMs aren’t widely understood or universally accepted, particularly at school - so we’re going to be bumping into some turbulence.

Some of that turbulence will come from AI detectors.

It is not possible to reliably detect AI writing (with the possible exception of an essay that starts with, “As an AI language model…”). Generally speaking (and as Mollick points out in his article), detectors either don’t work well or, when they do, are easily fooled by changing some words in the essay.

It’s not hard to get an LLM to write great content if you know what you’re doing. People who think they “know AI writing when they see it” are thinking about AI writing that’s generated by a simple prompt like “write me an essay on the topic of toilet paper during the pandemic.” But with some back and forth conversation with the LLM, the content generated improves dramatically, and is not easily detectable.

Not to mention editing! Some tactical edits to a paper written by AI will make it far more likely to be perceived as written by a human.

But that’s all about the kids who are using AI without anyone being able to tell. Probably more importantly, the likelihood of false positives when using AI to detect AI is pretty high (like the Texas A&M prof who flunked more than half his class because ChatGPT falsely claimed to have written their finals … this is a pretty bad way to try to detect AI writing, but many of the AI detectors aren’t much better).

This 👆 is my concern. This 👆 is where parents need to be ready.

A bunch of college seniors ought to be well equipped to handle this scenario.

But what about an anxious high school sophomore? Or a preteen middle schooler?

Parents need to be knowledgeable enough that if their kid says “but I wrote it!” and the teacher says they didn’t because AI said they didn’t, the parents don’t automatically side with the “AI detector.”

So … how could you ever possibly prove that your kid wrote what they said they wrote?

First, have the conversation before your kid is accused of anything: check in with your kid’s teacher about their policies on AI and their understanding of it. If they’re planning to use AI detectors, then do some research, and show them how easily AI detectors can be defeated.

Next, make sure your kid is writing papers in something with a document history like Google Docs. You can review the revision history down to minute detail (no pun intended).

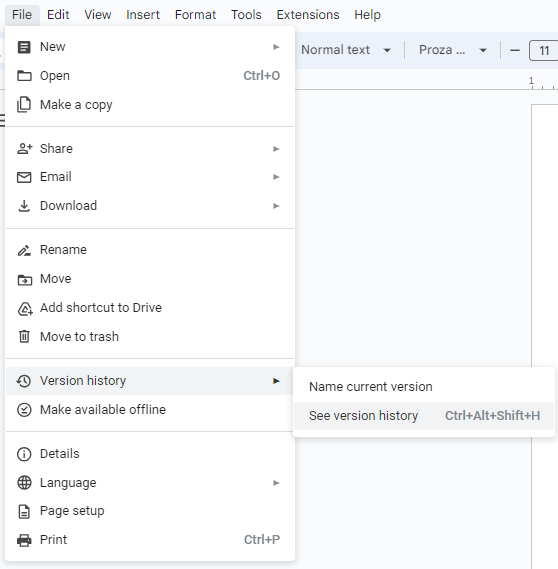

Click “see version history”:

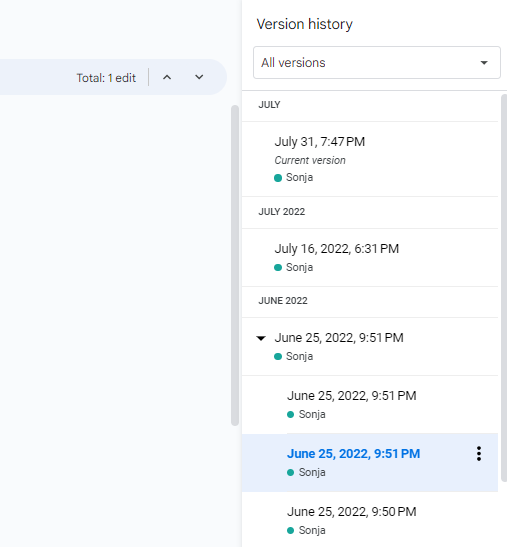

That reveals the version history sidebar.

Cruising the edits will quickly show you whether your student was pasting in large blocks from ChatGPT, or whether they were painstakingly writing their own essay.
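If you (or a teacher) are comfortable with a little code, the same "big paste vs. gradual writing" check can be roughly sketched in Python. This is only an illustration, not a tool Google Docs provides: it assumes you have already copied the text of a few versions out of the version history by hand into a list of strings, and the function name `flag_large_insertions` and the 50-word threshold are made up for this example. A flagged jump is a prompt to look closer, not proof of anything (kids also paste in their own notes and quotes).

```python
import difflib


def flag_large_insertions(snapshots, max_words=50):
    """Compare each saved version with the one before it.

    `snapshots` is a list of essay texts in chronological order
    (hypothetical input, copied out of the version history by hand).
    Returns a list of (snapshot_index, words_added) for every single
    contiguous chunk of more than `max_words` new words.
    """
    flagged = []
    for i in range(1, len(snapshots)):
        before = snapshots[i - 1].split()
        after = snapshots[i].split()
        # Word-level diff; autojunk=False keeps common words from being ignored.
        matcher = difflib.SequenceMatcher(None, before, after, autojunk=False)
        for tag, _a0, _a1, b0, b1 in matcher.get_opcodes():
            # "insert" and "replace" both bring in new words from the later version.
            if tag in ("insert", "replace") and (b1 - b0) > max_words:
                flagged.append((i, b1 - b0))
    return flagged


if __name__ == "__main__":
    versions = [
        "I think that",
        "I think that dogs are great",
        "I think that dogs are great " + " ".join(f"word{n}" for n in range(120)),
    ]
    for index, words_added in flag_large_insertions(versions):
        print(f"Version {index} added {words_added} words in one chunk")
```

A steady trickle of small additions and rewrites looks like someone writing; one version that suddenly contains several finished paragraphs is worth a conversation.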

Remember “show your work” in math? How, if you did it with the calculator, you didn’t really have that history of how you got your answer? This is just like that. If a teacher forbids AI use in the classroom, students should hang on to their edit history … for their own sake.

…I imagine a creative kid could type in their essay manually from the LLM. But are they going to fake edits? Are they going to fake their way through changing paragraphs, writing something new, moving things around? This is further evidence of them writing it, or not. If they do go to such lengths, there’s a different conversation to be had and LLMs aren’t the topic anymore.

My hope for the long term is that teachers will quickly embrace teaching kids to effectively write with LLMs, and not restrict AI from the classroom altogether. Learning how to collaborate with AI is going to be a life requirement as it is integrated into more and more functionality of our tech.

And don’t fret - kids aren’t going to suddenly lose their ability to think critically, though they may apply it in a new direction - how they engage with the AI, how to decide what to keep, what to rewrite, what to do from scratch, etc. They’re also likely to learn from working with AI, when done well, and when guided on how to do so.

… and isn’t that the point?